I spend a lot of time worrying about the complexity of human technical systems.

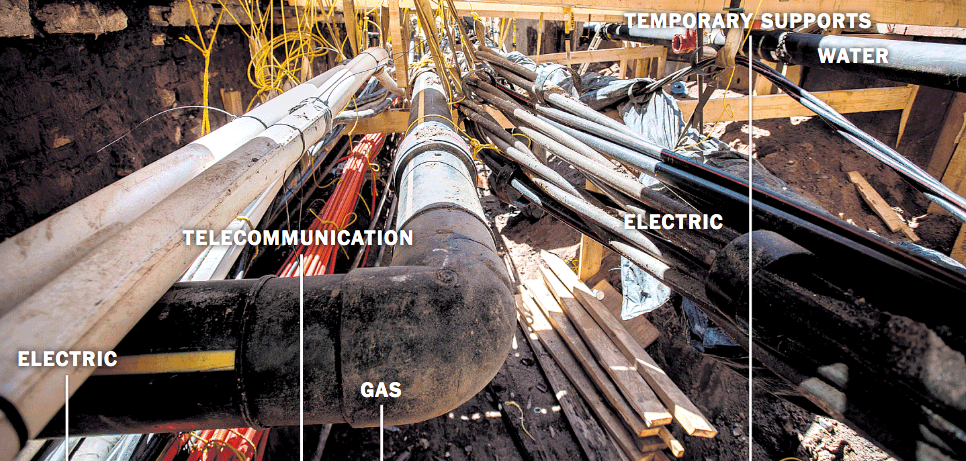

SURROUNDED BY OUR OWN COMPLEXITY

The concerns are mainly these:

- they are becoming so complex that no single individual or institution can control them

- the way they interlock creates a meta-system that is even further beyond control, since it literally has no-one managing it as such

- the impacts and costs, the true state, of these systems are often rendered invisible

- the resource use required to sustain these systems is unsustainable

- the lifestyles supported by these systems are unsustainable

- to deal with these systems, ever more systems are added, which are themselves complex and unsustainable, and contribute even further to the emergent meta-system

- the individuals carried by these systems become more dependent on them, and less aware of the risks and costs they pose, the more complex they get - because of the intractability and illegibility of the systems - which is a reinforcing feedback loop

Separate from the last issue - a limit to human curiosity for complexity - is the issue of normalization. We may be quite well aware how complex the system is, but don't care because it just seems to work.

So many systems in life are now in hypercomplex mode.

- money: dynamic markets and pricing, monetary models including reserve banking, technical and legacy payments systems, assets and valuation models

- health: diagnosis, treatment, costing, materials, technology, data, imaging

- travel: land and nature division, construction and design engineering, materials, manufacturing and distribution, control systems, management and planning models

- food: farming animals and plants including inputs and machines, processing, supply chains and timing, distribution and packaging.

- telecoms: minerals and mining, technical manufacture of components/devices/infrastructure, cable-laying and radiofrequency relay and governance, data models and systems.

and more, through water, energy, cities and housing, education, media, governance, software and platforms, and other systems involved in modern living.

Each of these can fail in multiple ways, and the combination of two or more of them into a metasystem is riven with hypercomplexity and unknown risk.

What should we think, feel, or do about any of this? Particularly if we feel, for example, that one or more of the systems, and in particular the metasystem, is set up in such a way as to overtax natural resources, damage nature, exploit or harm a specific group of people, or build up risks of harm into the future?

I worry, as I say. It seems hard to find ways to unpick individual systems, let alone the emerging metasystem, and propose something simpler. Even understanding the basic facts of any of it is astonishingly difficult.

Even if one can conceive of individual system alternatives, say for food production and consumption, society in most cases has become so inured to the current systems, and the individual systems interlock so tightly and thus create a self-propelling metasystem - for example, how industrialized food production relates to healthcare and medicine - that change is very hard to plan in practice.

LEARNING TO TRUST TECHNICAL SYSTEMS

But there are some thoughts I have which enable at least deeper reflections and some calm.

Going down the escalators into the Swedish underground system can be a fascinating journey of levels. First from ground level to the first platform for the Green and Red Lines, then down to the previously deepest tunnels for the Blue Line, and finally down further to the new tunnels for the commuter line.

Descending an escalator into a tunnel to ride a train is a very physical metaphor of embedding oneself in a technical system. Each moment of descent is further commitment to and participation in - and reliance upon - the travel system and its technical infrastructure. And so it's a natural time to reflect on these systems and our relationship to them.

In the instances when I push back against the normalization of the experience and try to sense the system-engagement experience in stages, I find a sense of increasing dread and overwhelm initially as I go deeper. What happens if it collapses? What happens if the lights go out? What happens if the trains break?

But gradually, the sense of embodiment in the system - being already part of it - and of its inertia - that it will not change - starts to overtake any sense of dread brought on by awareness of the totality of engagement with the technical travel system.

And the question starts to arise in reverse. Is it not safer the deeper you go? Is not the rock harder and more stable? Has no-one planned for contingency and system failure? Is there any way of doing what I am trying to do - travel by train - without such a system being in place? Were previous systems, or would alternative systems be, safer or more governable?

Stabilizing one's conception of systems complexity and safety, by reviewing the facts of the matter, and the counterfactual alternatives that may seem safer and more manageable in the moment but less so when confronted live, seems like a good practice.

And it raises awareness of the systems that have always been part of our lives, prior to the industrial-technical age, and which have tremendous complexity of their own.

For example: the body is a stupendously complex entity, which 'works' without, in most aspects, any external direction. The eyes and ears interpret the environment, the heart beats, the skin releases heat and senses touch, cells respire and reproduce, food is processed and absorbed. We are no less 'embedded in' and reliant on the bodily systems of life than we are in the underground train systems on which we travel - in fact far more. And yet we spend less time worrying about them, at least in terms of 'system complexity', and how it will all 'keep working'.

Of course, the body is a product of billions of years of evolution, but that's not why we trust it. We trust it because it seems we have no option. At least in the case of human systems we can adapt them directly - with the body, we basically cannot make a system adaptation.

Bodily systems are not the only hypercomplex environments which we humans have historically engaged with. Imagine a forest-dwelling hominid in a time before settled agriculture. What are they doing or feeling?

They are no less embedded in that environment than they are in their bodies or we are in our underground systems. They rely on the tree canopy for shade, the undergrowth for shelter, the microclimate for heat, the weather for water and wind, and the supreme complexity of the ecosystem itself to supply plants, seeds, animals, furs, fibres, medicines, paints and whatever else their primitive economy may require. Any one of these dimensions is subject to critical change: the forest environment is dynamic and volatile, and yet the human creatures are entirely reliant on it.

Here again the totality of reliance on a system that is beyond our control is revealed. If nothing else, we can take from this that our dependence on grand systems is not new. Nature, and bodies, are not obvious, and are less controlled, in their totality, than human systems. So this is a source of potential calm, if not resolution, in relation to our unease with modern systems on which we rely: it's systems all the way down, and the further you go, the less control we have.

More precisely, it invites us to consider what governs our actual relationship, psychologically, with systems, as human individuals.

THE EXPERIENCE OF COMPLEXITY

Firstly, there is risk, specifically risk of harm. At some level, all living is risk. There is a risk of bodily failure - illness or accident - at any point. And there is environmental risk - a tree might collapse on you in a primordial forest, the food source might fail or spoil, you might be attacked by animals or other humans. Most of these are still risks directly, or are replaced by close analogues in the modern context. And yet: is the risk of harm higher or lower in modern systems versus classical systems?

The risk might be divided into two types: isolated risk and system risk itself. For example, if we compare, say, an early railway system to a modern underground system, it's clear there is less danger of the 'system' collapsing with the early railway, since the system is far less evolved. There were (let's say) no signals, no electrification, no tunnelling, no onboard systems of any sort: it was just a steam-train and a wagon on a track.

But while the modern underground train has multiple potential points of system failure, we can observe that each item of the system may itself be safer, if not necessarily more robust. On the early railway, the steam-train might derail, the window glass might shatter (into lethal shards, before safety glass), the engine might explode, and so forth.

So, system failure is one kind of risk, but isolated risk is another kind of risk. The two ought to be compared in assessing what we are most concerned about: are we worried about the system per se, or the risks for ourselves in engaging with it?

Risk is not the only dimension through which we engage systems directly.

We also have direct senses of the system and its components. One idea to consider is that we do govern complex systems very effectively, through these surface experiences, both at any one moment and across time. If something makes an alarming sound, or smell, or looks alien in whatever way, we will reject it. And so it was with any early system: humans were perhaps as suspicious as, say, cats investigating a new object or new space.

But as with cats, once the range of experiences that the object or space throws up is somewhat better understood, the sense of fear or threat subsides. We get calm, that is to say, not just because of some kind of familiarity and tuning out of a potential threat (which may also be in play) but because we learn over time, from basic senses and our native inductive reasoning, what the risk of a system may in fact be. Whether this direct sensing, and thus native risk assessment, is as reliable across bodies, ecosystems and underground trains is a question, but it's not the same as simply ignoring risk.

More profoundly, and speculatively, humans, and indeed the natural physical world, may have some kinds of embedded intelligence that enable large systems to cohere without overarching governance.

This certainly seems to be the case with bodies and with ecosystems. There are no ostensible levers in these systems: no one actor is controlling them moment by moment; in fact it's hard to see many instances, except for dramatic ones, in which the whole system is being manipulated at once at all. And yet they exhibit all kinds of stability and self-regulation.

Somehow this self-governance must be embedded in the elements of the system, and expressed at the overall level. What this is, for example how individual cells 'know' to combine into specific organs, is truly unknown.

But this unknown self-organization principle may have resonance everywhere, and for reasons that are truly hard, or impossible, for us to see. Is that so strange? That the universe, even the newfangled elements and features of human infrastructure, exhibits some kind of embedded intelligence or at least competence, that is, fittedness for purpose?

This embedded intelligence doesn't in fact have to be magical. The fact that so many phenomena in nature exhibit normal distributions over time, or even the theory of unidirectional time in entropy, are statistical artefacts of the world: it's harder for certain things to happen than others, given the number of things that must happen for that to be so. In the same way, by the sheer weight of probability, human systems, if well designed and once established past their basics, may have some of the same statistical stability, which will manifest as embedded intelligence over time.
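To make the statistical point a little more concrete, here is a minimal sketch in Python. It is only an illustration under a strong assumption of my own: that a system can be pictured as a set of fully independent, fair coin flips. The names `probability_between` and `N`, and the choice of 100 flips, are hypothetical. The sketch counts exactly how many ways there are to land near the middle compared with how many ways there are to land at the extreme.

```python
from math import comb


def probability_between(n: int, low: int, high: int) -> float:
    """Exact probability that between `low` and `high` (inclusive) of n
    independent fair coin flips come up heads."""
    favourable = sum(comb(n, k) for k in range(low, high + 1))
    return favourable / 2 ** n


N = 100  # hypothetical number of independent events

p_middle = probability_between(N, 40, 60)     # outcomes near the average
p_extreme = probability_between(N, 100, 100)  # every single flip comes up heads

print(f"near the middle: {p_middle:.4f}")
print(f"all heads:       {p_extreme:.3e}")
```

Under these assumptions, about 96% of all outcomes fall between 40 and 60 heads, while there is exactly one way out of 2^100 for every flip to go the same way. Nothing steers the flips towards the middle; there are simply vastly more ways of ending up there. Real systems are not made of independent coin flips, of course, so this shows the flavour of the argument rather than proving it.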

Some of this embedded intelligence may simply be the inductive, experientially-grounded knowledge of system behaviour over time, shared among individuals.

For example, if the fundamental components of a system function within measured tolerances, then the system may be safe enough: it may have a buffer against total failure embedded, even if some, even many, failures may yet occur. This implies a hierarchy of safety such that, as long as the core doesn't cease functioning, there will be no full-system collapse. This doesn't per se eliminate the risk that the core components will fail, but it does mean the largest risk in the system is not a result of complexity.

And relatedly, the signs of risk in those core components may be more detectable than others. As a hypothetical example, railway tracks are a core component of rail systems. And (this is conjecture to make the point), track health is easier to maintain and fault-detect than, say, the standard driver control interfaces. The tracks can be checked for all trains simultaneously too, while driver controls must be checked train by train. This implies that, even if driver control interfaces fail, the trains will not derail before emergency brakes kick in.

So: one possible instance of embedded intelligence, beyond anything statistical, that may emerge in human systems is, from long experience and without any explicit governing principle, a hierarchical approach to system design, which prevents multiple components failing in a cycle, and which invites special levels of maintenance on the core. Humans may have a natural reflex to design around such 'failsafe' hierarchies, and to avoid feedback loops that damage them. It's hard to know how much embedded intelligence we really have, but that some of it is directed to systems design is not impossible to imagine.
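The hierarchy of safety described here can be sketched in a few lines of Python. This is a toy model, not a description of how any real railway is engineered: the `Component` class, the `system_state` function and the component names are all hypothetical, chosen only to show the rule that peripheral failures degrade the system while only a core failure collapses it.

```python
from dataclasses import dataclass


@dataclass
class Component:
    name: str      # hypothetical label, for illustration only
    core: bool     # True if the whole system depends on this component
    working: bool  # True if it is currently within tolerance


def system_state(components: list[Component]) -> str:
    """Classify the system using the hierarchy-of-safety rule."""
    # Any failed core component means full-system collapse.
    if any(c.core and not c.working for c in components):
        return "collapsed"
    # Failed peripheral components only degrade the system.
    if any(not c.working for c in components):
        return "degraded"
    return "ok"


network = [
    Component("tracks", core=True, working=True),
    Component("ticket machines", core=False, working=False),
    Component("platform displays", core=False, working=False),
]

print(system_state(network))  # prints "degraded"
```

In this toy model any number of peripheral components can fail and the state is still only "degraded". The outcome depends on the health of the core, not on how many parts the system has, which is the sense in which the largest risk is not a result of complexity.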

COMPLEXITY OVER TIME

One assumption in thought and discussion around the hypercomplexity of human systems is that everything is getting more complex over time.

I wonder if this is so. In the example above, of a proto-human facing a jungle environment, the density of information required to assess risk and opportunity, the paucity of it, the speed of decision-making, the costs of failure, all seem to be overwhelmingly greater than in the case of a modern human using a mobile phone on a train.

In part, the major difference is that the further back you go through the systems that humans have confronted to survive and thrive, the closer you get to systems where, not only is there no safety engineering, the 'system' - that is the natural environment - is actually trying to kill you. One thing we may comfortably say, when we buy a train ticket, or use a mobile phone, is that the train and phone do not have as goals an intention to kill, in the way that any number of forces and actors in a primordial forest might.

So, then, what about pre-industrial, but post-technical times: before mass industry and complex technical geoinfrastructures, but in eras where human cities and institutions were evolved?

In European, for example Roman or Byzantine, societies, or classical Persian or Chinese contexts, historians tell us the institutional complexity was immense. While this is not precisely the same as a pure technical system, it has a meaningful parallel in that many human institutions were indeed precursors of modern technical replacements, and just as much part of the successful functioning and risk management of the whole society.

Is there evidence to suggest that either the total complexity, in a quantitative sense, or the risk, in terms of scale of potential harm, was any less for an individual embedded within them? It's hard to say, and this feels suitably like a middle ground between totally un-made systems with hostile elements and lack of risk engineering, and the modern scenario of risk-managed, enemy-free but hypercomplex systems.

Systems may get more complex, but they may also have better architectures over time. Beyond the pure hierarchy of system elements, and the hardening of the core systems, and avoidance of feedbacks, characteristic features of system robustness can naturally emerge over time. Two obvious ones are:

- modular or nodal subsystems that do not send negative feedbacks to each other (or to core/root system elements)

- modular governance whereby the maintenance and monitoring of the system is broken into modular clusters so that cultural and operational failings in any one system governance model are not automatically replicated in any other system.
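The first of these features can be illustrated with a small Python sketch. Everything in it is hypothetical: the subsystem names, the two wiring diagrams, and the simplifying assumption that a failure always spreads along every link it can. The function `affected_by` simply follows the 'feeds into' links outward from a failed subsystem to see how far the damage could travel.

```python
from collections import deque


def affected_by(failure: str, feeds_into: dict[str, list[str]]) -> set[str]:
    """Return every subsystem reached by a failure spreading along the
    'feeds into' links (a breadth-first walk from the failed subsystem)."""
    reached = {failure}
    queue = deque([failure])
    while queue:
        current = queue.popleft()
        for neighbour in feeds_into.get(current, []):
            if neighbour not in reached:
                reached.add(neighbour)
                queue.append(neighbour)
    return reached


# Hypothetical wiring in which subsystems feed back into each other and the core.
entangled = {
    "ticketing": ["signalling"],
    "signalling": ["power", "ticketing"],
    "power": ["signalling", "ventilation"],
    "ventilation": [],
}

# Hypothetical modular wiring: the core (power) feeds the modules, never the reverse.
modular = {
    "ticketing": [],
    "signalling": [],
    "power": ["signalling", "ventilation"],
    "ventilation": [],
}

print(sorted(affected_by("ticketing", entangled)))
print(sorted(affected_by("ticketing", modular)))
```

With the entangled wiring, a ticketing failure can reach all four subsystems, including the core. With the modular wiring it stays inside ticketing. The number of subsystems is the same in both cases; only the architecture differs.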

THE META-SYSTEM & THE LIMITS OF HUMAN IMMERSION

While the analysis above invites, for me at least, some reduction in concern about the risks of complex human systems, it doesn't resolve a couple of foundational worries - which maybe are the core concerns in the first place.

The meta-system, where many systems intersect, is not itself a designed system: it's simply an emergent phenomenon that may have none of the embedded intelligence I have suggested might exist in the other systems. It might just be a bank of latent risks, whereby unexpected behaviours in one system, which are not inherently disruptive in its own system, are hugely disruptive in another. An example of this would be: mobile phone signals disrupting radiocommunications in aviation. Or: a pandemic which requires that schools stay closed, leading to extra and unsustainable pressure on hospital workers, who are both required in extra numbers on the wards and needed at home to care for their children who are now necessarily home from school.

Finally, the major risk of modern systems may simply be the load on human cognition. Even if we were to say that natural systems and pre-modern system environments were as complex as modern systems, it's still definitely possible, particularly when confronted with navigating a meta-system and its instabilities and risks, that humans feel overwhelmed by complexity and in particular change.

This is a real risk, and ironically a reversed one: the danger of a system may not be the risk that it collapses and fails to serve the humans using it, but that the humans needed to operate and maintain the systems for everyone else get overwhelmed and become unable to deal with them. There are some signs of this, specifically, in the case of certain avionics computers. And perhaps there are signs of it at the political level, as formal regulatory and operational governance at nation-scale seems unable to keep up with the pace of change of technology.